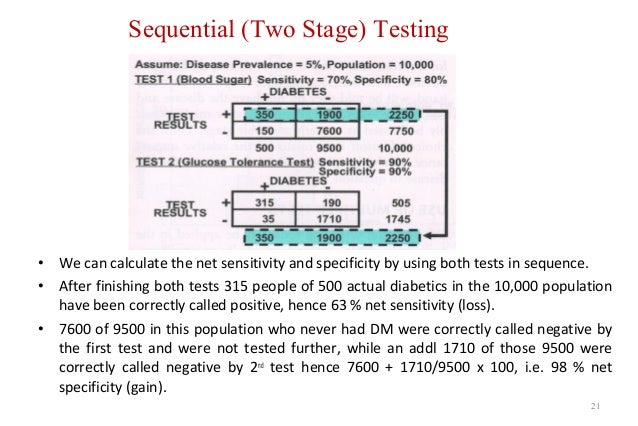

- Two stage sequential testing

We consider the problem of experimental design when the response is modeled by a generalized linear model (GLM) and the experimental plan can be determined sequentially. Most previous research on this problem has been limited either to one-factor, binary-response experiments or to augmenting the design when there are already sufficient data to compute parameter estimates.

Generalized sequential probability ratio tests (hereafter abbreviated GSPRT's) for testing between two simple hypotheses have been defined in. The present paper, divided into four sections, discusses certain properties of GSPRT's. In Section 1 it is shown that, under certain conditions, the distributions of the sample size under the two hypotheses uniquely determine a GSPRT. In Section 2, the admissibility of GSPRT's is discussed, admissibility being defined in terms of the probabilities of the two types of error and the distributions of the sample size required to come to a decision. In particular, notwithstanding the result of Section 1, many GSPRT's are inadmissible. In Section 3 it is shown that, under certain monotonicity assumptions on the probability ratios, the GSPRT's form a complete class with respect to the probabilities of the two types of error and the average distribution of the sample size over a finite set of other distributions. In Section 4, finer characterizations are given of the GSPRT's that minimize the expected sample size under a third distribution satisfying certain monotonicity properties relative to the other two distributions. These characterizations yield monotonicity properties of the decision bounds.

The sigmoid dosage-mortality curve, secured so commonly in toxicity tests upon multicellular organisms, is interpreted as a cumulative normal frequency distribution of the variation among the individuals of a population in their susceptibility to a toxic agent, which susceptibility is inversely proportional to the logarithm of the dose applied. In support of this interpretation is the fact that when dosage is inferred from the observed mortality on the assumption that susceptibility is distributed normally, such inferred dosages, in terms of units called probits, give straight lines when plotted against the logarithm of their corresponding observed dosages. It is shown that this use of the logarithm of the dosage can be interpreted in terms either of the Weber-Fechner law or of the amount of poison fixed by the tissues of the organism. How this transformation to a straight regression line facilitates the precise estimation of the dosage-mortality relationship and its accuracy is considered in detail. Statistical methods are described for taking account of tests which result in 0 or 100 per cent kill, for giving each determination a weight proportional to its reliability, for computing the position and slope of the transformed dosage-mortality curve, for measuring the goodness of fit of the regression line to the observations by the χ² test, and for calculating the error in position and in slope and their combined effect at any log dosage. The terminology and procedures are consistent with those used by R. A. Fisher, who has contributed an appendix on the case of zero survivors. Except for a table of common logarithms, all the tables required to utilise the methods described are given either in the present paper or in Fisher's book. A numerical example selected from Strand's experiments upon Tribolium confusum with carbon disulphide has been worked out in detail.
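The probit method summarized above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the 1935 computing scheme, which worked from probit tables and hand-computed working probits. The sketch assumes the model probit(p) = a + b·log10(dose) on the normal-deviate scale. Bliss's probits add 5 to this deviate, which shifts `a` but leaves the fit unchanged. The model is fitted by maximum likelihood with Fisher scoring, that is, iteratively reweighted least squares. The function name `fit_probit`, its parameters, and the counts in the usage example are hypothetical. In the code, the weight `w` plays the role of "a weight proportional to its reliability". Groups with 0 or 100 per cent kill enter through fitted rather than observed probabilities. The returned Pearson statistic is the χ² goodness-of-fit check.

```python
from math import log10
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal distribution (mean 0, sd 1)


def fit_probit(doses, n, dead, tol=1e-10, max_iter=50):
    """Fit probit(p) = a + b * log10(dose) by maximum likelihood (sketch).

    doses: dose applied to each group (must be > 0)
    n:     number of organisms tested in each group
    dead:  number killed in each group (0 and n are allowed)

    Uses Fisher scoring (iteratively reweighted least squares on a
    "working probit"). Returns (a, b, chi2), where chi2 is Pearson's
    goodness-of-fit statistic for the fitted line.
    """
    x = [log10(d) for d in doses]
    a, b = 0.0, 0.0  # start with p = 0.5 at every dose
    for _ in range(max_iter):
        sw = swx = swxx = swz = swxz = 0.0
        for xi, ni, ri in zip(x, n, dead):
            eta = a + b * xi              # fitted probit (normal-deviate scale)
            mu = STD_NORMAL.cdf(eta)      # fitted kill probability
            dens = STD_NORMAL.pdf(eta)
            # Inverse variance of the working probit: the group's "reliability".
            w = ni * dens ** 2 / (mu * (1.0 - mu))
            # Working probit is built from the fitted value, so groups with
            # 0% or 100% kill need no special treatment.
            z = eta + (ri / ni - mu) / dens
            sw += w
            swx += w * xi
            swxx += w * xi * xi
            swz += w * z
            swxz += w * xi * z
        # Weighted least-squares line of z on x gives the updated (a, b).
        xbar, zbar = swx / sw, swz / sw
        new_b = (swxz - sw * xbar * zbar) / (swxx - sw * xbar * xbar)
        new_a = zbar - new_b * xbar
        done = abs(new_a - a) < tol and abs(new_b - b) < tol
        a, b = new_a, new_b
        if done:
            break
    # Pearson chi-square of observed versus expected deaths.
    chi2 = 0.0
    for xi, ni, ri in zip(x, n, dead):
        mu = STD_NORMAL.cdf(a + b * xi)
        chi2 += (ri - ni * mu) ** 2 / (ni * mu * (1.0 - mu))
    return a, b, chi2


if __name__ == "__main__":
    # Hypothetical counts (illustrative only, not Strand's data):
    # 20 insects per dose, including groups with 0% and 100% kill.
    a, b, chi2 = fit_probit([1, 2, 4, 8, 16], [20] * 5, [0, 3, 10, 17, 20])
    print(f"a = {a:.3f}, b = {b:.3f}, chi2 = {chi2:.3f}")
    # Counts are symmetric about dose 4, so LD50 = 4.000
    print(f"LD50 = {10 ** (-a / b):.3f}")
```

Because the working probit uses the fitted kill probability, no extreme group has to be dropped or adjusted by hand. The sketch does not detect complete separation, where every group shows 0 or 100 per cent kill with no overlap and no finite estimate exists. It also omits the standard errors of position and slope that the abstract mentions.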